In-Lab Studies

Babies’ & Children’s Perception of People’s Talking Faces

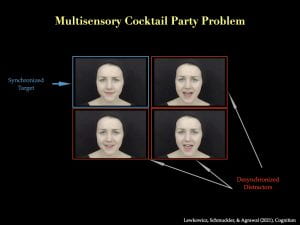

Babies and young children often find themselves in situations where they see and hear multiple people talking. This could be at a birthday party, a family gathering, or a classroom full of other kids and adults. Wherever they happen to be, they must correctly match each person’s voice with that person’s face in order to perceive each talker as a distinct individual. How this ability develops, and which specific skills its development requires, is the topic of our current studies (for our previous studies that have led up to the current ones, please see here). To address this question, we present different videos of talking faces and simply record how and where your baby or child directs his or her attention! Participation in this study involves no more than a 45-minute visit to the LLAMB Lab.

How does the infant brain predict expected events?

This study of 6- to 12-month-olds asks how the infant brain responds to a picture that has been paired with a sound. Infants view a series of photographs of a cartoon character. Just before each cartoon appears, a unique sound is played that predicts its appearance. Then, on a few test trials, the sound is played but the cartoon is not shown. The unexpected ABSENCE of the cartoon, even though the sound predicted it, triggers a response in the visual part of the brain. We are testing a new device that measures this brain response using invisible light to detect very small changes in the amount of oxygen in different regions of the brain. The infant wears a cap with small sensors that measure these changes. The entire experiment lasts about 15 minutes, plus a few minutes to place the cap on the infant’s head.

How does the brain respond to a story as it unfolds?

This study uses the same set of oxygen-measuring sensors described in the previous experiment. We are testing adults, children, and infants as they view short movie clips from the commercial film “Despicable Me”. Our goal is to understand how the language networks in the brain develop with age and with experience of English. Some of the movie clips are in English, some are in Spanish, and some are modified to make them less intelligible. As people become more experienced in understanding English, we expect these language networks to become more complex and more sensitive to differences in the audio content of the movie clips. The entire experiment lasts about 30 minutes, plus a few minutes to place the cap on the participant’s head.

Online Studies

We are currently conducting several online studies that you are welcome to participate in:

Human Face Preference: https://lookit.mit.edu/studies/f196033f-4785-4e0d-b87a-a579573f5f4b/

Bouncing Balls: https://lookit.mit.edu/studies/3a20ec18-f701-4c0e-83c9-5961d451c6e1/
